Freeing the Bird

Musk’s takeover of Twitter and the consequences for monitoring and censorship of social media.

Elon Musk ‘freed the bird’ when the Twitter acquisition was finalised on October 27th, but in doing so he probably released an onslaught of online hate speech as well. The process started in April 2022, when the Twitter board accepted his offer to buy the platform for roughly $44 billion. Since the takeover, the subject has generated an incredible amount of interest, including plenty of memes and jokes. Although much of the commentary on Musk’s takeover is currently humorous, it is important to note that this is a very significant change in the social media sphere. Musk is now the sole owner of a privatised platform and can single-handedly decide what can and cannot be posted on Twitter. Musk’s own explanation for the purchase is that he wanted to “help humanity” by creating an unmonitored, uncensored platform where free speech is accepted and promoted.

Now that the takeover has actually happened, there has been a great deal of conjecture about how the platform will change, as Musk has dissolved the Twitter board and become its sole member. In his first two weeks as owner, his plans for the platform have ranged from firing about half the staff, to charging $8 per month for account verification, to requiring parody accounts to explicitly state that they are parodies.

Hate speech and censorship

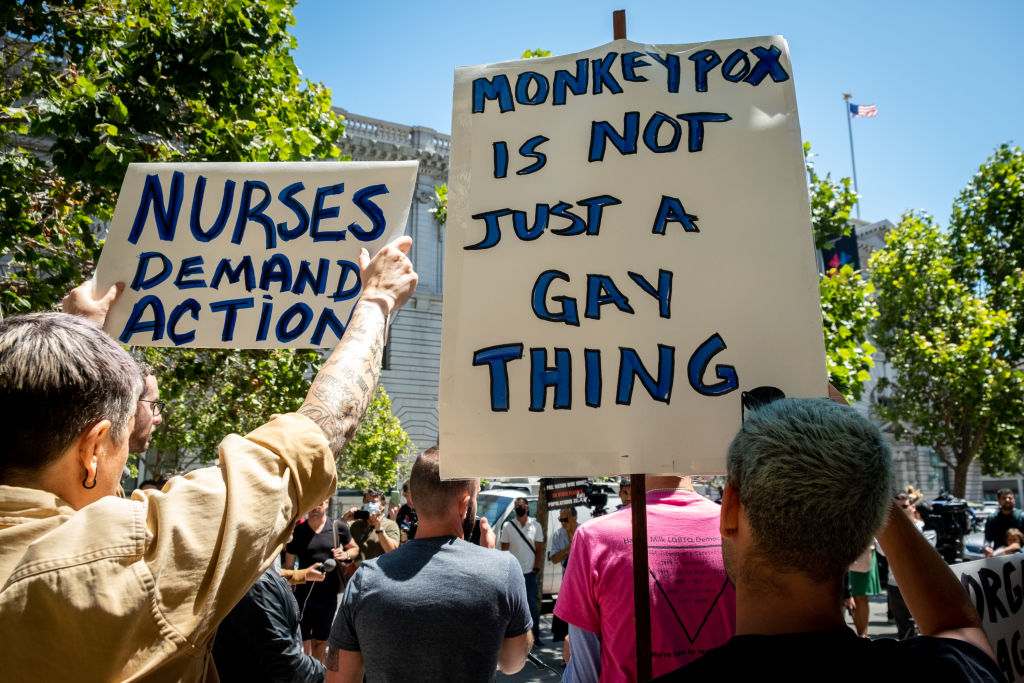

This lack of clear guidelines significantly complicates moderation and the fact-checking of disinformation and online manipulation, especially as Musk has expressed interest in removing censorship altogether, including censorship of hate speech and racist comments. Researchers found an immediate and significant increase in hate speech on the platform after the takeover.

Musk did outline a strategy in a single tweet: to prevent hate speech and polarisation, Twitter would set up an independent council to decide on content and account reinstatements. However, what this council will concretely look like is yet to be determined. Furthermore, although about 50% of employees were fired, the cuts reportedly affected only about 15% of the trust and safety staff, so there is still some focus on moderation.

Information resilience

Musk’s monopoly on content moderation on Twitter shows that we cannot rely solely on platforms to act against disinformation or hate speech. Instead, we should spend resources on strengthening people against the harmful effects of disinformation and online manipulation. One way to do so is to focus on prebunking rather than debunking. In pursuit of stronger information resilience, we have created several tools that help people arm themselves against disinformation. One example is our online Bad News game, which strengthens players’ media literacy by introducing them to the main tactics used in disinformation and making sure they know how to recognise it when they encounter it online.

We believe that the (online) world should be a safe and inclusive place for everyone, regardless of race, religion, gender, or sexual orientation. That’s why we also aim to enhance online safety by identifying and addressing instances of hate speech on the internet.

At Tilt, we are innovators in information resilience. Our aim is to create projects and products that help arm people against the onslaught of disinformation and online manipulation in the online sphere. Interested in learning more? Reach out to hello@tilstudio.co!
